AI agents are cybersecurity firms' newest employees

Cybersecurity firms are being tasked with implementing AI agents across their teams. But the technology's capabilities remain limited.

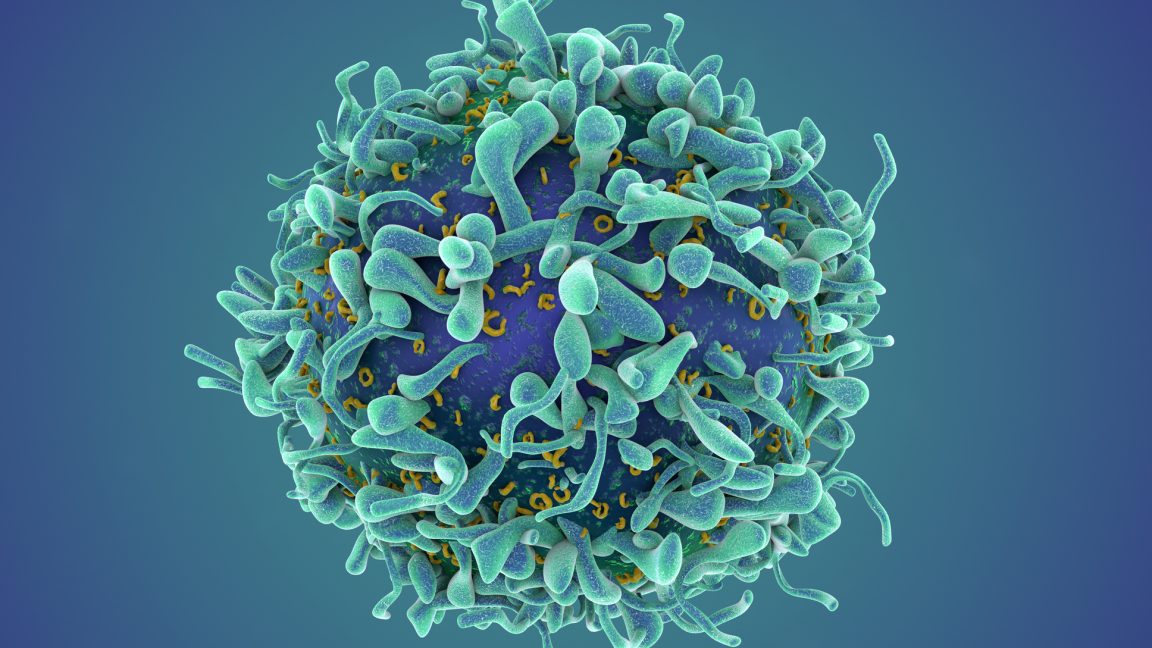

Rawlstock/Getty Images

- Security firms are using AI agents to manage cyber threats and reduce analyst workloads.

- Huntress uses AI agents for threat detection, cutting investigation time to minutes and reducing analyst workload by 90% for more than a third of investigations.

- AI agents have limitations with intricate tasks, requiring human oversight in high-risk situations.

As cyber threats grow more complex, security firms are facing an ever-increasing workload while struggling to hire qualified analysts.

To keep up, some are onboarding a new kind of labor: AI agents.

Unlike generative AI tools such as ChatGPT, which rely on prompts, AI agents are assigned specific roles and trained to execute multi-step workflows.

The shift toward agentic workflows is already underway. In a 2025 McKinsey survey, 62% of respondents said their organizations are experimenting with AI agents. In cybersecurity, adoption is also rising. Research from ISC2, a cybersecurity nonprofit, found that 30% of professionals reported embedding AI security tools into their operations. Many of these systems are evolving into agent-like tools that can execute multi-step workflows once handled by human analysts.

Now, cybersecurity firms are being tasked with implementing these systems across their teams.

Early results show promise. But the technology's capabilities remain limited, raising questions about how quickly AI agents can scale in high-stakes environments — and what that means for workers and their jobs.

Taking on threat detection

Huntress, a cybersecurity platform, has rolled out just under 20 AI agents across its security operations center, or SOC, which manages threat alerts for 240,000 customers, said Eric Stride, the company's chief security officer.

Stride said the agents automate investigations that were once handled manually by its 50-person SOC team. In Huntress' identity threat detection and response process, an agent detects suspicious signals, including unusual login activity and unauthorized inbox rules. That signal triggers an orchestration agent, an AI "supervisor" that delegates tasks, which then launches 12 sub-agents to pull data, analyze activity, and identify evasion techniques.

The orchestration agent determines whether activity is malicious or benign, and escalates unclear cases to a human analyst. After a quality check, the system drafts an incident report for the client.
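For readers who want a concrete picture, the supervisor pattern Stride describes can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Huntress' implementation: the sub-agents here are plain functions rather than AI models, there are two of them instead of 12, and every name, risk score, and threshold (`check_login`, `check_inbox_rules`, `low`, `high`) is hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable, Dict, List


class Verdict(Enum):
    MALICIOUS = "malicious"
    BENIGN = "benign"
    ESCALATE = "escalate_to_human"


@dataclass
class Finding:
    source: str  # name of the sub-agent that produced the finding
    risk: float  # 0.0 (clearly benign) to 1.0 (clearly malicious)


# Assumption: a sub-agent is modeled as a function from a signal to a Finding.
SubAgent = Callable[[Dict[str, object]], Finding]


def check_login(signal: Dict[str, object]) -> Finding:
    # Hypothetical rule: a login from an unfamiliar country looks risky.
    unusual = signal.get("login_country") != signal.get("usual_country")
    return Finding("login_check", 0.9 if unusual else 0.1)


def check_inbox_rules(signal: Dict[str, object]) -> Finding:
    # Hypothetical rule: a newly created inbox rule looks risky.
    return Finding("inbox_rule_check", 0.9 if signal.get("new_inbox_rule") else 0.1)


def orchestrate(
    signal: Dict[str, object],
    sub_agents: List[SubAgent],
    low: float = 0.3,   # hypothetical threshold: at or below is benign
    high: float = 0.7,  # hypothetical threshold: at or above is malicious
) -> Verdict:
    """Fan the signal out to every sub-agent, then decide or escalate."""
    if not sub_agents:
        raise ValueError("at least one sub-agent is required")
    findings = [agent(signal) for agent in sub_agents]
    score = sum(f.risk for f in findings) / len(findings)
    if score >= high:
        return Verdict.MALICIOUS
    if score <= low:
        return Verdict.BENIGN
    # Anything in between is treated as unclear and sent to a human analyst.
    return Verdict.ESCALATE


AGENTS: List[SubAgent] = [check_login, check_inbox_rules]
```

The point of the sketch is the middle band: when the sub-agents disagree, the supervisor does not guess and instead hands the case to a person, which mirrors the escalation step described above.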

Stride told Business Insider that the process typically takes 20 to 30 minutes manually, but can now be completed in minutes. Stride said the system has reduced analyst workload by 90% for more than a third of investigations and generates about 10,000 incident reports a month.

For analysts, that shift means spending less time triaging alerts and more time investigating complex attacks.

"Our SOC analysts now have their 'Iron Man suit' to be more effective against the adversary," Stride said.

Agents move into customer support

Mikey Pruitt, head of AI labs at DNSFilter, said the company launched an AI agent across its customer support team of fewer than 10 engineers, which now handles all inbound Tier 1 tickets.

When a customer submits a ticket, the agent categorizes the email based on its level of complexity. It then resolves routine issues — such as confusion about a product feature — using internal documentation. More complex tickets are escalated to human staff.
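A rough Python sketch of that triage flow might look like the following. It is a simplified stand-in, not DNSFilter's system: simple keyword matching substitutes for whatever model the company actually uses, and the documentation entries, complexity markers, and names (`DOCS`, `COMPLEX_MARKERS`, `triage`) are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple

# Hypothetical internal documentation, keyed by topic.
DOCS: Dict[str, str] = {
    "block page": "Placeholder answer drawn from the block page help article.",
    "allow list": "Placeholder answer drawn from the allow list help article.",
}

# Hypothetical phrases that mark a ticket as too complex for the agent.
COMPLEX_MARKERS: Tuple[str, ...] = ("outage", "billing dispute", "data loss")


@dataclass
class Resolution:
    handled_by: str        # "agent" or "human"
    reply: Optional[str]   # drafted answer, or None when escalated


def triage(ticket_text: str, docs: Optional[Dict[str, str]] = None) -> Resolution:
    """Categorize a ticket, answer routine ones from docs, escalate the rest."""
    docs = DOCS if docs is None else docs
    text = ticket_text.lower()
    # Step 1: categorize by complexity; complex tickets go straight to staff.
    if any(marker in text for marker in COMPLEX_MARKERS):
        return Resolution("human", None)
    # Step 2: resolve routine questions using internal documentation only.
    for topic, answer in docs.items():
        if topic in text:
            return Resolution("agent", answer)
    # Step 3: nothing in the docs matches, so the agent does not guess.
    return Resolution("human", None)
```

Restricting the agent to what the documentation covers keeps its answers grounded, but it also means anything outside those documents falls back to a human.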

Pruitt said the process takes about four minutes.

While a human typically handles 35 tickets a week, Pruitt said an agent can complete 60 requests, saving support engineers up to three hours per week.

"They love it," he said. "They don't want to be bothered by mundane tasks."

Where AI agents fall short

Despite early gains, AI agents still have clear limitations.

At Huntress, Stride said his agents struggle with vague tasks and can produce inconsistent or inconclusive answers. They perform well on repeatable tasks but are less effective at identifying and disrupting complex threats like ransomware attacks. They also can't make high-risk decisions without human oversight.

Pruitt said DNSFilter's agent is limited to internal documentation and can struggle with specialized knowledge. Early on, it mistakenly advised a customer to bypass a reseller partner, the customer's main point of contact, to resolve an issue.

"That was definitely a fail that we had to engineer around," Pruitt said.

Why companies are betting on AI agents

Still, the economics are compelling.

Pruitt said an AI agent costs about $15,000 to $16,000 a year to operate and performs the workload of two full-time support engineers.

"We're saving the company $200,000 a year by deploying this one agent," Pruitt said. "Hiring less people is definitely part of the strategy."

As a result, Pruitt anticipates that DNSFilter will scale back hiring of entry-level staff. As the AI agent's capabilities advance to address more complex support tasks, he envisions a future in which his customer support team transitions into roles as engineers or quality assurance specialists within the company.

For now, both companies see agents as a way to scale output without increasing head count.

"What we are trying to do is make our team of about 150 perform like a team of 500," Pruitt said. "By the end of the year, we'll get there."

Read the original article on Business Insider